- [1] X. Meng, X. Wang, S. Yin, and H. Li, (2023) “Few-shot image classification algorithm based on attention mechanism and weight fusion” Journal of Engineering and Applied Science 70(1): 14. DOI: 10.1186/s44147-023-00186-9.

- [2] L. Wang, H. Wang, S. Yin, and L. Wang, (2025) “Masked vision transformer for fast hyperspectral image classification” IEEE Transactions on Geoscience and Remote Sensing 63. DOI: 10.1109/TGRS.2025.3572242.

- [3] Y. Wang, Y. Deng, Y. Zheng, P. Chattopadhyay, and L. Wang, (2025) “Vision transformers for image classification: A comparative survey” Technologies 13(1): 32. DOI: 10.3390/technologies13010032.

- [4] H. Song, H. Xie, Y. Duan, X. Xie, F. Gan, W. Wang, and J. Liu, (2025) “Puredatacorrection enhancing remote sensing image classification with a lightweight ensemble model” Scientific Reports 15(1): 5507. DOI: 10.1038/s41598-025-89735-1.

- [5] Y. Shao, J. Yang, W. Zhou, H. Sun, and Q. Gao, (2025) “Fractal-Inspired Region-Weighted Optimization and Enhanced MobileNet for Medical Image Classification” Fractal and Fractional 9(8): 511. DOI: 10.3390/fractalfract9080511.

- [6] Q. Du, Z. Liu, Y. Song, N. Wang, Z. Ju, and S. Gao, (2025) “A lightweight dendritic shufflenet for medical image classification” IEICE Transactions on Information and Systems: 2024EDP7059. DOI: 10.1587/transinf.2024EDP7059.

- [7] G. Sangar and V. Rajasekar, (2025) “Optimized classification of potato leaf disease using EfficientNet-LITE and KE-SVM in diverse environments” Frontiers in Plant Science 16: 1499909. DOI: 10.3389/fpls.2025.1499909.

- [8] S. Zheng and Y. Wang, (2025) “SFNet: A Pyramid Based Feature Fusion Convolutional Neural Network With Embedded Squeeze-and-Excitation Mechanism for Retinal OCT Image Classification” International Journal of Imaging Systems and Technology 35(5): e70197. DOI: 10.1002/ima.70197.

- [9] C. Zhuang, X. Yuan, L. Gu, Z. Wei, Y. Fan, and X. Guo, (2025) “Frequency Regulated Channel-Spatial Attention module for improved image classification” Expert Systems with Applications 260: 125463. DOI: 10.1016/j.eswa.2024.125463.

- [10] R. Shang, M. Hu, J. Feng, W. Zhang, and S. Xu, (2025) “A lightweight PolSAR image classification algorithm based on multi-scale feature extraction and local spatial information perception” Applied Soft Computing 170: 112676. DOI: 10.1016/j.asoc.2024.112676.

- [11] T. Jinaga, B. Banothu, S. Nickolas, and G. R. Patil, (2025) “An Adaptive Lightweight Sequence Space Model for Medical Image Classification” SN Computer Science 6(7): 892. DOI: 10.1007/s42979-025-04387-2.

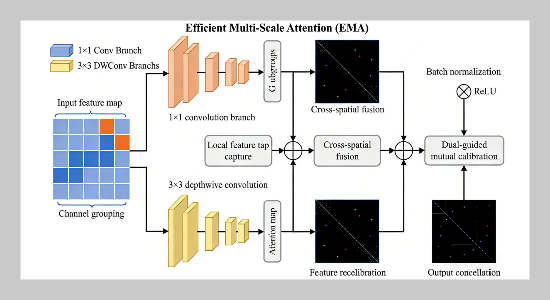

- [12] D. Ouyang, S. He, G. Zhang, M. Luo, H. Guo, J. Zhan, and Z. Huang, (2023) “Efficient multi-scale attention module with cross-spatial learning” In: ICASSP 2023 - 2023 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 1–5. DOI: 10.1109/ICASSP49357.2023.10096516.

- [13] L. Liu, B. Zhou, Q. Li, G. Fu, Y. Wang, and H. Chu, (2025) “Parallel joint encoding for drone-view object detection under low-light conditions” Frontiers in Artificial Intelligence 8: 1622100. DOI: 10.3389/frai.2025.1622100.

- [14] B. Guan, G. Chu, Z. Wang, J. Li, and B. Yi, (2025) “Instance-level semantic segmentation of nuclei based on multimodal structure encoding” BMC Bioinformatics 26(1): 42. DOI: 10.1186/s12859-025-06066-8.

- [15] R. Boukhenoun, H. Doghmane, K. Messaoudi, and E.-B. Bourennane, (2025) “Comparative Analysis of CNN Performances Using CIFAR-100 and MNIST Databases: GPU vs. CPU Efficiency” Recent Advances in Electrical & Electronic Engineering 18(10): 2025–2037. DOI: 10.2174/0123520965348453250226080233.

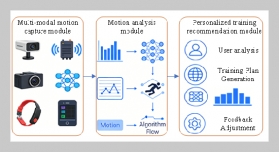

- [16] L. Teng, H. Li, and Y. Si, (2026) “Neural Tensor Network And Adaptive Graph Convolution For Sports” Journal of Applied Science and Engineering 29(6): 1483–1491. DOI: 10.6180/jase.202606_29(6).0015.

- [17] V. Pentsos, S. Tragoudas, K. Nagesh Gowda, and M. Schmit, (2026) “Improved Image Classification using Lightweight Deep Neural Network Enhancements” ACM Transactions on Intelligent Systems and Technology 17(1): 1–26. DOI: 10.1145/3779421.

- [18] S. Yin, L. Wang, T. Chen, H. Huang, J. Gao, J. Zhang, M. Liu, P. Li, and C. Xu, (2025) “LKAFormer: A lightweight Kolmogorov-Arnold transformer model for image semantic segmentation” ACM Transactions on Intelligent Systems and Technology. DOI: 10.1145/3759254.

- [19] Z. Liu, Z. Sun, Y. Zang, W. Li, P. Zhang, X. Dong, Y. Xiong, D. Lin, and J. Wang, (2026) “RAR: Retrieving and ranking augmented MLLMs for visual recognition” IEEE Transactions on Image Processing 35: 388–401. DOI: 10.1109/TIP.2025.3644175.

- [20] A. Umamageswari, S. Deepa, and K. Raja, (2026) “Deep Learning and Image Processing for Cancer Cell Identification” In: AI in Diagnostic Radiology: Clinical Applications and Case-Based Insights. IGI Global Scientific Publishing, 1–40. DOI: 10.4018/979-8-3373-5801-7.ch001.